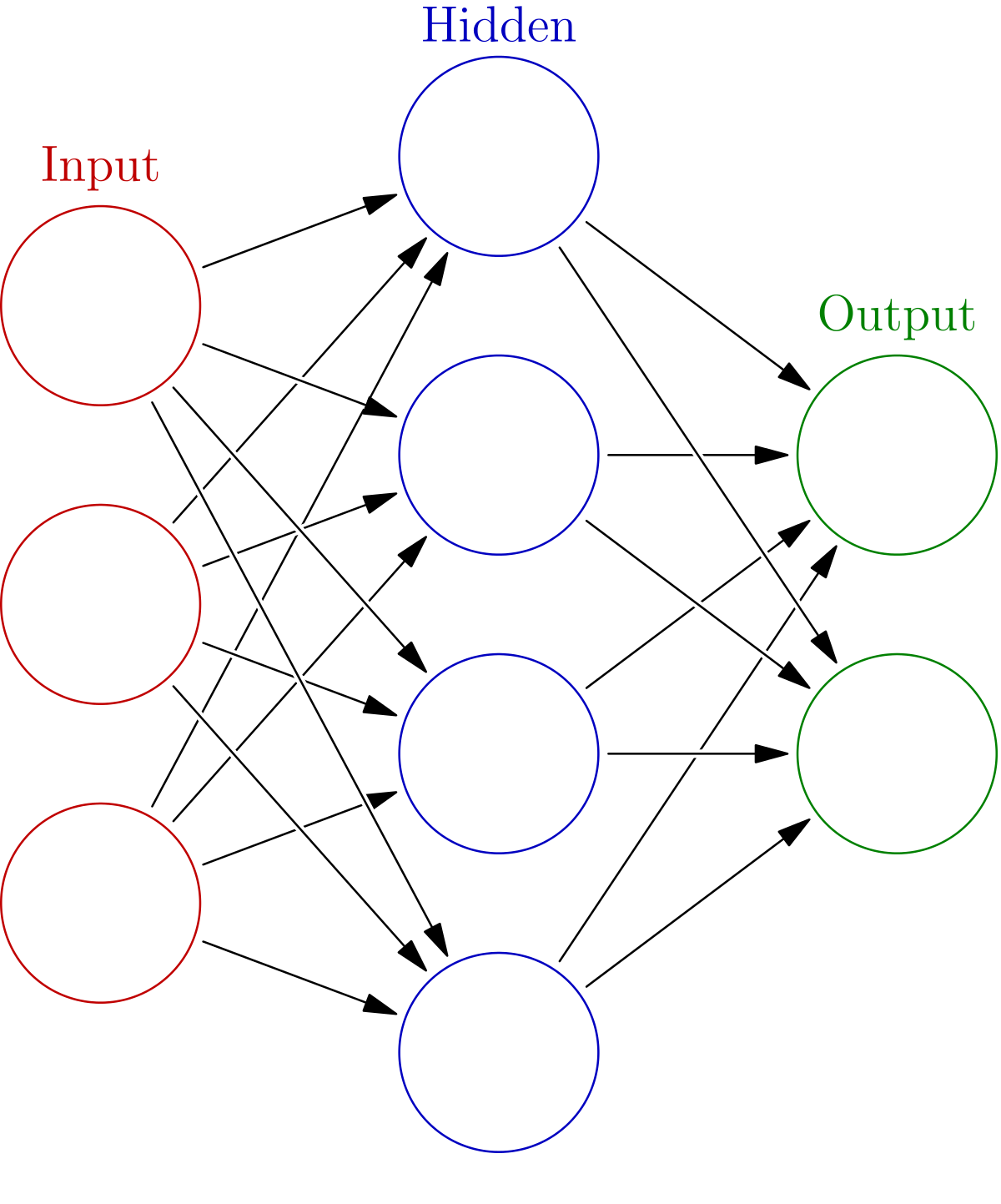

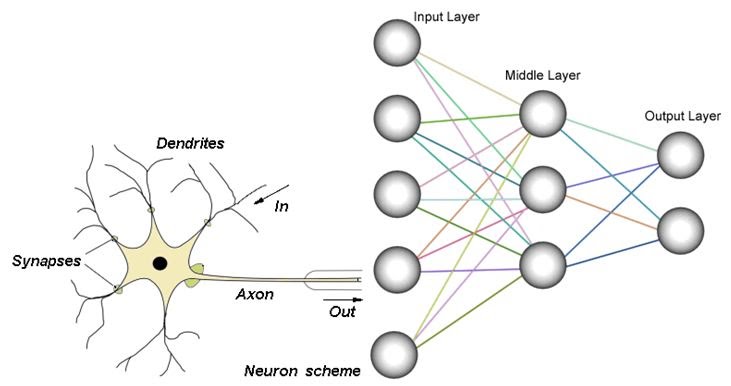

First, raw data is given to our Input Layer, which passes it on to our Hidden Layer. The Hidden Layer multiplies each input by its corresponding weight and sums the results into a single value, the Charge 'X'.

Then the calculated Charge 'X' is fed into our Activation Function, and we get an output 'y'. That output 'y' is the value that is sent out from the Hidden Layer and into the Output Layer (which has weights of its own!).
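The two steps above (weighted sum, then activation) can be sketched for a single neuron. This is only an illustration, not the exact network from the images: the function names `sigmoid` and `neuron_output` and the sample numbers are made up, the activation is assumed to be Sigmoid, and any threshold or bias term is left out because the text does not describe one.

```python
import math

def sigmoid(x):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron_output(inputs, weights):
    """Hypothetical helper: compute one neuron's output 'y'."""
    # Charge 'X': each input multiplied by its weight, then summed.
    charge = sum(i * w for i, w in zip(inputs, weights))
    # Output 'y': the charge passed through the activation function.
    return sigmoid(charge)

# Illustrative values only.
y = neuron_output([1.0, 0.0], [0.5, -0.5])
print(y)
```

The value `y` is what the neuron would pass on to the next layer.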

Finally, the network's actual output 'y' is compared to the expected value (or desired value) 'Yd'. The Output Layer calculates the error with the formula Yd - y (expected minus actual output).
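Putting the whole pass together, here is a rough sketch of input to Hidden Layer to Output Layer to error. It assumes a Sigmoid activation in both layers, a single output neuron, and no bias terms; the names `layer_forward` and `forward_and_error` and all weights are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weight_rows):
    """Return the output of every neuron in one layer.

    weight_rows holds one list of weights per neuron.
    """
    return [
        sigmoid(sum(i * w for i, w in zip(inputs, row)))
        for row in weight_rows
    ]

def forward_and_error(inputs, hidden_weights, output_weights, y_d):
    """Run one forward pass and return (y, error) with error = Yd - y."""
    hidden_out = layer_forward(inputs, hidden_weights)
    # The Output Layer has weights of its own; here it is a single neuron.
    y = layer_forward(hidden_out, [output_weights])[0]
    return y, y_d - y

# Illustrative values only: 2 inputs, 2 hidden neurons, 1 output neuron.
y, error = forward_and_error(
    inputs=[1.0, 0.0],
    hidden_weights=[[0.5, 0.4], [0.9, 1.0]],
    output_weights=[-1.2, 1.1],
    y_d=1.0,
)
print(y, error)
```

A positive error means the actual output was below the desired value, and a negative error means it was above.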

NOTE! The ANN uses a different activation function, known as the Sigmoid function. The Sigmoid function is used here because, unlike the Step and Sign functions, Sigmoid is considered to be a Soft-Limiter function.

Sigmoid counts as a soft limiter because it has a "range" of truthness: its output can be any value between 0 and 1. It is NOT binary.
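To see the difference between hard and soft limiters, the three functions can be compared side by side. This is a small sketch. Treating an input of exactly 0 as "on" for Step and Sign is an assumption, since conventions vary.

```python
import math

def step(x):
    """Hard limiter: outputs only 0 or 1 (x == 0 treated as 1 by assumption)."""
    return 1 if x >= 0 else 0

def sign(x):
    """Hard limiter: outputs only -1 or +1 (x == 0 treated as +1 by assumption)."""
    return 1 if x >= 0 else -1

def sigmoid(x):
    """Soft limiter: outputs any value strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))

for x in (-2.0, -0.5, 0.0, 0.5, 2.0):
    print(f"x={x:+.1f}  step={step(x)}  sign={sign(x):+d}  sigmoid={sigmoid(x):.3f}")
```

Step and Sign jump between two values, while Sigmoid changes smoothly as the input moves away from 0.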